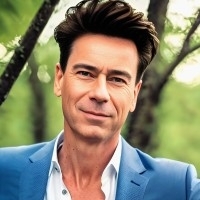

In this episode, we talked with Matt Oberdorfer, founder and CEO of Embassy of Things. Embassy of Things enables industrial enterprises to modernize and build their own operational cloud historians, industrial digital twins, and data lakes.

In this talk, we discussed how to modernize industrial data architecture to resolve development bottlenecks and simplify the path from pilot to scale. We also explored the application of generative AI to the industrial environment.

Key Questions:

- What is your perspective on the human or organizational element as a bottleneck to moving from POC to scale?

- What is the architectural element for industrial data and industrial AI?

- What is the difference between generic AI and generative AI?

Transcript.

Erik: Matt, thanks for joining us on the podcast today.

Matt: Thanks, Erik. Thanks for having me.

Erik: Yeah, I'm really looking forward to this. I think we're going to touch on some topics that are close to my business — topics around how to succeed in an IoT deployment, and also some things where I'm personally really interested in looking at the future of generative AI in the context of IIoT. So I'm really looking forward to digging into those with you, Matt.

But maybe before we go there, I would love to get a bit of a better sense of where you're coming from and how you arrived as the founder and CEO of Embassy of Things. It's always interesting to understand why somebody decided to devote themselves to a particular problem. You've been involved with — what is this — 10, 15 companies as some kind of co-founder or kind of senior executive over the past two decades. So I would love to touch on some of the highlights and just understand your thinking that led you now to found Embassy of Things.

Matt: My background is really being involved in the industrial side of analytics. I was part of a venture fund that basically started and incubated 20-plus industrial analytics companies. In all these little startups, we were trying to get projects with one of the big manufacturing, oil and gas, energy, power, or utilities companies. My job was to be the CTO and help them to put the technology stack together, to actually get there and be successful as an analytics company and offer the AI, the cool, new AI stuff, and so on.

What happened is that many, many times, there were successful POCs that then later on failed. The POC was like, "Oh, we achieved everything we wanted to do as a POC." But then, whatever happened next was, "Oh, the leadership decided to not actually do it," or, "The company couldn't actually put it into production," or, "It was not scalable." So I looked more and deeper into the issue. What I found was that there's a little big problem there, which is, in most of these projects, everybody involved overlooked the fact that they had to get the data for industrial AI out of systems that are basically closed off from the internet. Systems that are in power plants are not easily accessible.

So if you want to create a real-time analytics system that analyzes and does anomaly detection, you can either do it there on-premise. But you cannot really do it in the cloud unless you actually pump the data into the cloud, which is not possible because you have no internet connection. But then, on-premise, you don't have the capabilities in the compute and storage power to do machine learning. Because that requires a lot of storage for all the historical data, as well as a lot of compute power.

Back when that happened, I had the idea that it would be great to, at some point, start a company that focuses on this completely overlooked problem, which is kind of plumbing. Let me give you this example. Nobody, when they buy a new house, says, "Does it also have a shower and a faucet in it with water?" Everybody just assumes, "Oh, it looks beautiful. The water is there. The faucet is there. The plumbing is there." So what happens many times is, when a digital transformation project starts and analytics companies say, "Hey, we can do this cool stuff," and AI companies say, "We can forecast the future," in the first POC, they say they can do it. They get a little bit of sample data. Because the people that are really on the operational side say, "Nobody's going to touch our systems here. We're going to FTP you some sample data. We can give you a tape, or a thumb stick, or a thumb drive, or something that has the data on it." The AI company or analytics company goes off, trains their models on, let's say, half a terabyte of data, comes back and says, "Yeah, we solved it. We can give you 5% of production increase or cost savings or whatever." That's the successful POC, because you demonstrated that your AI software will provide the value. We have proof of value, right? But then, to actually put that into a scalable production solution that's highly available, that does that in real-time, that's a whole different ballgame. These instances back then really triggered the idea.

Then, at some later point, I was sitting with a couple of friends in Houston, Texas and we were thinking about what company we should do next. Because I had two successful exits a year before. That idea came back. We said, "You know what? This is still one of the biggest problems, and most of the companies that try this overlook it." Hence you have organizational resistance between the operation side and the IT side and the security side, and so on. Let's focus on that. Let's focus on something that's the most boring thing to do, which is really to transport data from the operational data sources securely into the enterprise-level data lakes and databases, and then make that data make sense. Meaning, contextualize it. Be able to enrich it with this information so that, ultimately, data scientists and data engineers can use it. That way, the customer will love us. Obviously, the cloud vendors will love us, because we are basically pumping tons of data into the cloud. Cloud services are consumed. Also, the analytics companies that want to sell the AI stuff, they will love us too. Because suddenly, they no longer do a POC and then fail. They can actually have a POC and then actually go into production. So that's the backstory of what got me to start this company.

Erik: Yeah, it makes complete sense. I don't know how many instances where we've either encountered this or our clients that we've been working with, and we tried to help them roll some roadmap out. Then they run into this challenge of, as you said, the very simple problems of these plumbing issues of just being able to get access to the data in a secure way that both IT and the ops guys are comfortable with. So I want to get into the company later and deep dive—

Matt: Sorry for interrupting, but let me just say this. How I see it is the digital transformation hell, the purgatory of digital transformation, is plastered with successful POCs. It's just full of successful POCs that all land in POC hell, so to speak. That's the way I perceive it. Go ahead, please.

Erik: Yeah, I know. Absolutely. So you have, on the one hand, this proliferation of successful POCs that end up failing in production. A big part of that is the technical challenge of then implementing a POC while you're getting real-time data from operating systems. There's also a lot of other challenges there.

You've published a book recently, The Trailblazer's Guide to Industrial IoT. It was interesting to me that a lot of the topics that you touched on in that book are not directly related to the technical challenge, but they're also related to the, let's say, the human, the organizational challenges getting a POC. Because these technical challenges, you could say a big part of them is also risk perception. And so there's also a human element, or there's a decision making element of, what amount of risk can we accept? Are we comfortable with cloud, et cetera? So there's always this: risk is only risk if somebody views it as such. I'm interested in hearing your thoughts on that perspective, on the, let's say, also the human element or the organizational element as a bottleneck towards moving from POC to scale?

Matt: Absolutely. I mean, Erik, this is the biggest issue of all, the biggest challenge in everything. The book is actually very different from any industrial IoT book that's out there, because it focuses not on the technology. It focuses on the human and the organizational challenges. So it's actually a book that's in two parts. The first part is written as a novel. It's a storytelling book or a story told inside the book of a typical manufacturing, a typical energy company that basically says, "Hey, we want to use AI. We want to use it to save the company. Otherwise, we're going to be left behind and so on." And so the team in the book really experiences all the different organizational challenges, also some technical ones. But the book is not talking about what is OPC UA, or can you tell me how the MQTT protocol works or whatever? It's really about that.

Some of these challenges really start with the digital strategy. Ultimately, the number one thing — I think, Erik, you know this very well. But the number one thing that has to happen in a company is there has to be an industrial data strategy. There has to be also someone who owns it. So you have to have a leader. The leader has to be able to make decisions. The leader has to have resources, money, and the power to actually build something that spans across multiple departments and multiple organizations. The second part is this person also has to be able to create a vision, articulate it, and get the stakeholders behind him. Then the third thing is there has to be use cases that have to be defined.

One of the biggest challenges is to define the first use case. Because, ultimately, the first use case will either make or break the entire project. If you pick the wrong use case, then there will be practically no second chance. So it becomes very, very crucial. Of course, without really spoiling the book, what happens many times is that as soon as you start collecting use cases in discovery sessions (I call it death by discovery), everybody in the organization comes up with a use case, and everybody wants their use case to be first, of course. Then automatically, you say, "Well, which are the biggest business impact use cases? Which one has the highest return on investment or whatever?" Then you can rank them this way.

That said, if you do that, highly likely, what will happen is that a very big use case ends up being the top use case. The effort and money that it takes to implement that might be many, many months. Maybe even two years, because it's all-encompassing, with many departments involved. And so the risk of it failing is huge. Because only if everybody for this entire time actually works together and plays nice is it going to be successful. So yes, it has the biggest business impact, but it also has the highest risk of failure.

So if you rank it in a different way where you say, "Well, let's also say what's the time to value?" You mentioned this earlier, Erik. But the time to value meaning, how fast can you really deliver value directly back and show it to the leadership of the company? "Hey, we implemented this project. It costs, let's say, half a million dollars, but it saves you a million dollars per year, recurring, from now on." Once you have done that, once you have successfully put a use case in production, even if it's a small one, showing that you can do it, showing that it works, then you can tackle a bigger one and a bigger one and a bigger one.

A friend of mine said earlier in another podcast, it's like eating an elephant. So you eat an elephant with little scoops, but you cannot eat the entire elephant at once. I said, well, the first bite is a very small bite. Once that is successful, you can basically do a bigger bite of the elephant. And so ultimately, you can do all the use cases, but you have to find the right one to start with. So that's probably one of the biggest challenges initially: to get started, to do the right thing. Then there are other challenges later, which is how to actually pick the team and figure stuff out.

Erik: That's very well noted. So we're often employed to do use case road mapping and do this type of prioritization. A couple of the factors that we are always very attentive to are, how many stakeholders are involved in a particular use case? How many people is it touching? How many different data sources do we have to access? What is the risk, perceived risk? If this fails, then what happens? Then you start to identify that there's usually a handful of use cases that are maybe not going to revolutionize the business, but they're just touching a couple stakeholders. They just need data from a couple sources. If they fail, they don't really cause any problems. You'll say, well, these ones, we can get these off the ground, right? We can get these off the ground. We can generate a reasonable ROI. Again, it's not going to be maybe incredible, but it's going to be a reasonable ROI. Then the organization can look at it and say, "Okay. We did that." Through that process, you start to build up some of the infrastructure and some of the internal know-how and so forth. Then you can be more ambitious.
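Editor's note: the prioritization idea discussed above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a method either speaker describes in detail. The `UseCase` fields, the `friction` score, and the figures for the large use case are hypothetical; only the half-million-dollar cost and million-dollar annual saving come from the conversation.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    cost: float             # one-time implementation cost, in dollars
    annual_savings: float   # recurring value per year, in dollars
    months_to_deliver: int  # time to value
    stakeholders: int       # groups that have to cooperate
    data_sources: int       # systems the data has to come from

    def payback_years(self) -> float:
        """Years of savings needed to recover the implementation cost."""
        return self.cost / self.annual_savings

    def friction(self) -> int:
        """Hypothetical risk proxy: more people, systems, and months mean more risk."""
        return self.stakeholders * self.data_sources * self.months_to_deliver


def rank_for_first_deployment(cases):
    """Order candidates so that low-friction, fast-payback use cases come first."""
    return sorted(cases, key=lambda c: (c.friction(), c.payback_years()))


candidates = [
    # Made-up numbers for a large, cross-department use case.
    UseCase("plant-wide optimization", 5_000_000, 20_000_000, 24, 8, 12),
    # The small example from the conversation: costs $0.5M, saves $1M per year.
    UseCase("pump anomaly alerts", 500_000, 1_000_000, 4, 2, 2),
]

# Ranking by return alone puts the big use case on top...
by_roi = max(candidates, key=lambda c: c.annual_savings / c.cost)
# ...while ranking by friction and payback favors the small one as a first step.
first = rank_for_first_deployment(candidates)[0]

print(by_roi.name)
print(first.name, first.payback_years())
```

With these inputs, the return-only ranking selects the large use case, while the friction-aware ranking selects the small one with a payback period of half a year.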

Matt: I'm so glad you say that, Erik. Because this is basically where the organization also starts to learn and change. What I mean with this is — actually, what you said, and you guys are doing it just perfect — if you start with a use case that you can show to the organization and say, "Look, we did this. It works. This is the impact. This is the value," then the people inside the organization of the bigger use cases that could have been resistant before know two things. First of all, it's going to happen. This happened already with the other organization, so it's not like we can stop it. So the resistance level will go down. Because if everybody looks at the first deployment of a successful solution and said who wants to be part of the next one and who wants to be against it, suddenly you have way, way less resistance in your organization than if you start with the big elephant and try to eat it all at once. And everybody's like, "No, it's not going to happen." So I love exactly what you said there.

Erik: There's another perspective here which I'm really interested in hearing your thoughts on, which is the top-down versus bottom-up question. In part, that's a strategic question of, do we want to have an organizational effort to define what our approach is and then have this multi-year roadmap? Or do we want to allow people that are closest to the problem to just ideate? So there's an organizational element. There's also an architectural element.

So if you do a top-down thing, then you start really thinking about what is the architecture that we need to have in place to make all this happen, which puts a lot of delay. It puts your focus on the architecture upfront. If you have more of a bottom-up use case, then you can get things done much faster. But also, you might end up hacking things together. Then five years later, you figure out, "Oh, my god. What have we done? We've created this Frankenstein of 100 different use cases that don't talk to each other." So I guess there's tradeoffs either way, but I'm curious on how you think about approaching that problem.

Matt: This is kind of my deal. That's my day job, exactly this discussion specifically about architecture. For me, first is the use case. The use case drives the architecture, never the other way around. If I see people go to a whiteboard and start putting little logos of, let's say, a cloud service on there (let's say, Kafka, because Kafka can solve the world or whatever), that's already a recipe for failure. Because what is the use case? Why do you need Kafka? Why not some other publish-subscribe type of service that is offered by someone else? It's because that person is very familiar with Kafka, and that person believes that Kafka (I'm talking about Apache Kafka, to be specific) will solve the problem. It might be a good candidate. But for me, architecture starts at a much, much higher level.

When you start drawing an architecture that actually has particular systems or components in it, you're talking about a logical architecture, not a fundamental architecture that really describes the modules. You're already talking about a particular technology candidate that you have in mind that solves a particular problem of some architecture that you think is the right one. From my perspective, to actually build an architecture, you first select the use case. Maybe it's two or three use cases. Then you really go in a top-down approach to identify, without really having any technology in mind, the particular modules.

Let's say, you have a data ingestion and contextualization layer. That means data comes in from different data sources. It's not only time series data or log files or whatever. It also has meta information about the assets, where they are, how they are fitting together, how they are rolling up into a business unit, how they are reflected in a hierarchy, in a tree. How can you actually search through the tree? How can you select different types of the same asset for later queries? So you have context to it. You want to build a layer that brings that data together in one single source of truth. So that could be one layer.
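Editor's note: to make the contextualization idea concrete, here is a minimal Python sketch of an asset tree that rolls up into a business unit, can be searched by asset type, and can attach hierarchy context to a raw time series reading. The class, function, and asset names are all hypothetical and do not describe any particular product.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Asset:
    name: str
    asset_type: str
    children: List["Asset"] = field(default_factory=list)

    def walk(self, path=()):
        """Yield (path, asset) for this asset and everything below it."""
        here = path + (self.name,)
        yield here, self
        for child in self.children:
            yield from child.walk(here)


def find_by_type(root, asset_type):
    """Return the full hierarchy path of every asset of the given type."""
    return ["/".join(p) for p, a in root.walk() if a.asset_type == asset_type]


def contextualize(reading, root):
    """Attach type and hierarchy path to a raw reading, if the asset is known."""
    for path, asset in root.walk():
        if asset.name == reading["asset"]:
            return {**reading, "type": asset.asset_type, "path": "/".join(path)}
    return {**reading, "type": None, "path": None}


# A made-up hierarchy: one business unit, two sites, three assets.
tree = Asset("business-unit", "unit", [
    Asset("site-a", "site", [Asset("pump-1", "pump"), Asset("boiler-1", "boiler")]),
    Asset("site-b", "site", [Asset("pump-2", "pump")]),
])

pumps = find_by_type(tree, "pump")
enriched = contextualize({"asset": "pump-2", "value": 3.2}, tree)
print(pumps)
print(enriched)
```

Selecting "all pumps" across sites is the kind of query that only works once the time series data and the hierarchy live in one place.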

Another layer could be, where do we want to have that data to be accessed? Who's going to access it? Is it going to be business users that want to run, let's say, analytics? Is it going to be operators that actually have some applications that run analytic systems to actually operate machinery? It depends. Where these people are, it's where the actual data sits, where it has to be accessed and where it should be. So you have the data access layer. You have the data ingestion, curation, contextualization layer. So you're building an architecture of these components. Each of these components, then you can say, what candidates, what technologies, what cloud services, what systems, what applications, what third parties are out there.

You can say Kafka is one of them that would fit the bill to do this in this particular module. But there are also probably 15 other ones that are doing similar stuff. Then it becomes more, like, "Should we use this one or that one, because we have somebody who already knows how to do this," or, "This one is cheaper," or, "This one is faster," or whatever. The logical architecture is where you then actually really go down to the component level and say, okay, for ingesting data, we're going to use this particular service from a cloud. Let's say it's AWS Kinesis versus Kafka or whatever. Then you get to that level. But if you start with that level, we have a problem.

So it's kind of top-down and then drives more down and more down until you are really ending up at what is the physical architecture, which really is IP addresses, port numbers, protocols and all. That comes last out of all of it. That's it. Let me comment on this. You've got to do the homework to actually go from the top, the fundamental architecture, all the way down to really understand the effort it will take for you to implement it. Because only if you have reached the bottom, you'll know, okay, we're going to need so many virtual machines with that type of memory size. It's going to cost us X amount of dollars per month or whatever. It will take us so many people to set it up and maintain and operate. That will be our OpEx. So you will only know that information once you have actually got all the way to the bottom. Then at that point, you know what's going to be the effort to actually get there. That's my short answer to that.

Erik: Got you. Okay. So it's like top-down from an architecture standpoint, from application down to the base layer, the more communication layers. Then from maybe an organizational perspective — there's not a right answer here — but do you feel that also top-down tends to be more effective in your experience or more bottom-up? Is this just highly case by case?

Matt: Well, okay. Very good. Yes, regarding the organizational makeup of how to actually build or design the architecture, very good question as well. So who in the organization should be involved? Should we all come together and create a joint architecture, or should it be more like a few people that actually make the decision, and so on?

My personal experience is if you try to have the organizations all collaborating, it typically ends up being a non-workable solution, because everybody drives their own agenda. The SAP team still wants to be the owner of the single source. That's SAP. The operational guys think the single source is the operational data sources. The IT guys think their stuff is the thing where everything should be. It's not wrong of these organizations to do that, because that's what they are measured on and that's their job. That's their role, so they're driving it this way.

But if you try to say, "Oh, you guys all come together and freely come up with something. Forget your day job and build something new, which might actually even eliminate some of your responsibility and even organizational power. Give it up and do something else," that's very hard. Almost impossible. What happens? The first outcome is it's not going to happen at all, because they just can't do it. The second one is, you end up in some sort of limbo state where everybody agrees and gives a little bit here and there. And so you end up with something that is not scalable. It's not workable. It involves everybody in the grand matter. So it's a solution. Everybody agrees to it. But it's like you have all the countries of the world agree on one thing. If they finally agree on the thing, it's so watered down. That's not going to be helpful for anyone, right?

So my personal experience is you have to have someone from either the outside or from the inside key stakeholders in a smaller group. However, those people have to be able to understand the challenges that are involved in getting SAP data, in getting OT data, in getting IT data, knowing what an MES system, an EIM system, a data historian, SCADA, what these all are and why it's a problem to get data. They have to have a detailed knowledge on the lower side of the architecture but, at the same time, being also completely able to understand the business impact — talk the language of the CEO, executives, and so on, to articulate the vision and bring this all together. If you have that smaller group of people together and they can fully understand end to end what it takes, and that leads to a vision that can be then supported and sponsored by the executives including the CEO, that's more likely to actually succeed than if you just have all organizations doing a kumbaya.

Erik: Yeah, that's a great analogy. There are only so many problems that the United Nations can solve. Most problems need to be solved by nations, maybe by cities, maybe by neighborhoods. But you have to move down to the root of the problem if you really want to understand it.

Maybe this is a good point to introduce Twin Talk, your flagship product. Then you have a couple others, Twin Sight and Twin Central, which I'm interested in understanding. It sounds like they are designed — correct me if I'm wrong here — to intermediate between these. On the one hand, having a platform that, I guess, could be used across the organization but on the other hand allowing people to plug into that platform and work from more of a bottom-up use case-centric perspective. Help me understand where does this fit into the architecture stack. I guess companies all have a lot of legacy stuff that they're building on. So how does it fit in there? Then what's the value proposition? What's the before and the after effect that you're trying to achieve with Twin Talk?

Matt: Oh, absolutely. What we have created with our product suite is very simple and yet kind of complex. But the simplicity is that our products are software products. It's not a service. It's not Software as a Service. It's literally a software product to solve some of these key problems: connecting to data, contextualizing it, and visualizing it. So we call it the Industrial Data Fabric because it's like you can weave together your architecture. Our products can fill some of the gaps that typically happen. Our products are not solving the entire thing for a company and doing it all. They can help to bring together other components and make it whatever you want it to be. It's like a fabric. So you can do this.

The first product we have is called Twin Talk. Twin Talk is simply to get the digital twin and the physical twin to talk to each other. Hence, Twin Talk. That's the easiest way to describe it. The physical twin is all the operational data sources, including SCADA systems, different types of SCADA systems, different types of operational protocols, data historians, anything that sits basically in level one and level two of the Purdue model, which is a model that describes which layer or which level of systems can talk to which other layer. It goes basically from the lowest level, which is really at the physical sensor level, up to the enterprise network. There are different security layers in between.

Twin Talk basically allows you to bridge these security layers and get data from the very secure sensor network to an enterprise-level database or data lake. While it's doing this, it literally can contextualize the data and bring not only the time series sensor data up, but also all the information about the sensors. That's really what Twin Talk is all about.
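Editor's note: the layering idea behind the Purdue model can be illustrated with a small Python sketch. It assumes a deliberately simplified rule, that a system may only talk to its own level or an adjacent one, and it leaves out the DMZ and any site-specific policy. It is not a description of how Twin Talk works internally; the names below are hypothetical.

```python
from enum import IntEnum


class PurdueLevel(IntEnum):
    PROCESS = 0          # physical sensors and actuators
    BASIC_CONTROL = 1    # controllers close to the equipment
    SUPERVISORY = 2      # supervisory control such as SCADA and HMIs
    SITE_OPERATIONS = 3  # site-wide operations systems
    ENTERPRISE = 4       # business network, enterprise databases, data lakes


def may_talk_directly(a, b):
    """Simplified rule: only the same level or an adjacent level may communicate."""
    return abs(a - b) <= 1


def hops(source, target):
    """Levels that data passes through when it is relayed one level at a time."""
    step = 1 if target >= source else -1
    return [PurdueLevel(n) for n in range(source, target + step, step)]


direct = may_talk_directly(PurdueLevel.BASIC_CONTROL, PurdueLevel.ENTERPRISE)
route = hops(PurdueLevel.BASIC_CONTROL, PurdueLevel.ENTERPRISE)
print(direct)
print([level.name for level in route])
```

Under this simplified rule, a level-one controller cannot send data straight to the enterprise network, so anything that moves sensor data into a data lake has to relay it across each boundary in between.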

Erik: Just to clarify one point there. Then the digital twin is going to be provided by some other vendor. Is that right?

Matt: Yes, perfect question. Thanks, Erik. So the digital twin can be provided by a different vendor. We just actually announced Twin Central to be integrated with TwinMaker. TwinMaker is a cloud service from AWS that allows you to actually create a digital twin. It's a cloud service where you can create hierarchies and you can create assets. Let's say you take a manufacturing site. The assets can consist of all the different boilers, conveyor belts, whatever is in the manufacturing site. It also has the ability to fly through the manufacturing site in 3D, and so on. Basically, with this integration, we've integrated with this AWS service. That's the single source of truth for the digital twin.

Erik: Okay. Clear.

Matt: That integration is with our second product. TwinMaker from AWS is actually integrated with our second product, Twin Central. Twin Central is basically a solution that allows you to literally take the meta information and the hierarchical information from different data sources, and create one enterprise-wide model of your asset tree and hierarchy. Twin Central allows you to manage your digital twin, but it is not the source. The data source is really, in this case, the TwinMaker service. But Twin Central allows you to manage it and decide which data source holds which information. Is the information, let's say, in your SAP system? Is there another hierarchy in your MES system? You want to build a hierarchy that has multiple sites from multiple systems, reflect it in real time, and give that to an organization that uses, let's say, Power BI. People just want to navigate through that tree. That's what our second product, Twin Central, can do.

Erik: Okay. Clear. So you have Twin Central. You also have Twin Sight which is a no-code app builder. So that's for companies that want to build apps on top of Twin Talk. Is that then building fully deployable apps, or is this more of a toolkit for testing out concepts? How deployable is this at scale?

Matt: Yeah, it is a separate app, a separate software package that basically allows you to visualize data from different systems. What I mean with this is it is different from, let's say, Power BI, or Grafana, or some of the other visualization tools that are out there. Twin Sight is really designed to allow you to take data from an operational data source and show you, let's say, the flow rate of an oil well that comes directly out of the OT data source and then combine that, let's say, with logistical data that comes out of an EIM system. Then you are basically able to click through these charts and pull data from completely siloed, different data sources. So you have a user interface that gives you an overview and shows you one picture. But underneath, you have actually so many different data sources that it taps into. It's completely transparent for the user. It basically creates apps that do that.

Erik: Okay. Clear. So this is your software portfolio. You also have this Asset Catalog Studio which is around managing physical assets, sensors, et cetera. Is this more of a supporting middleware solution? How does that fit into the architecture?

Matt: The Asset Model Studio is a supporting function. It's actually funny. It was developed because a customer needed it, to be frank here. It's a problem that appears for many customers later when they actually get into production, which is this. Let's say you actually successfully build a data lake, and you get all the data in of, let's say, 20,000 assets. Then after you did that, you take all the data, and you train your machine learning models. Everybody's happy and all is in production. But then, the operational side, let's say, buys a new set of assets or sells some. Or, worst case scenario, they actually decide that the naming convention that they had so far is wrong. They want to change all the names of all the assets because they just want to standardize it or something. Now you have the following problem. If they do this, if they change all the names on the operational side, then all the namings and all the stuff that you've built on your IT side, inside your data lake, is going to be wrong because you have the old data in there.

So how do you solve this problem where you can change the naming of assets on one side but still keep the names on the other side? What we created is basically what's called an asset catalog manager. What you can do basically is you can allow the operational part of the company to pick the names, change them, and so on and so forth. But at the same time, you can define how these names are projected into an IT system, so that even if there's any need for changing names, naming conventions, and so on, it will not disrupt or change anything that you have built or want to build for your predictive analytics, for your business applications and for your reporting and your dashboards. It all stays the same. That's the asset model catalog.
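Editor's note: the renaming problem described here is essentially a mapping from changeable operational names to stable identifiers on the IT side. The following Python sketch shows that idea only; the `AssetCatalog` class, its methods, and the tag names are hypothetical and are not taken from the product.

```python
class AssetCatalog:
    """Maps changeable operational names to stable IT-side identifiers."""

    def __init__(self):
        self._stable_id_by_ot_name = {}

    def register(self, ot_name, stable_id):
        """Record a new asset under its current operational name."""
        self._stable_id_by_ot_name[ot_name] = stable_id

    def rename(self, old_ot_name, new_ot_name):
        """Operations renames an asset; the stable identifier does not change."""
        self._stable_id_by_ot_name[new_ot_name] = self._stable_id_by_ot_name.pop(old_ot_name)

    def project(self, reading):
        """Rewrite an incoming reading so downstream systems only see the stable id."""
        stable_id = self._stable_id_by_ot_name[reading["ot_name"]]
        return {"asset_id": stable_id, "value": reading["value"]}


catalog = AssetCatalog()
catalog.register("WELL_07_FLOW", "asset-0007")
before = catalog.project({"ot_name": "WELL_07_FLOW", "value": 41.5})

# The operational side later adopts a new naming convention.
catalog.rename("WELL_07_FLOW", "TX-W007-FLOWRATE")
after = catalog.project({"ot_name": "TX-W007-FLOWRATE", "value": 42.0})

print(before["asset_id"], after["asset_id"])
```

Because models, dashboards, and reports key on `asset_id` rather than on the operational name, a rename on the operational side changes only the mapping and nothing downstream.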

Erik: Okay. Great. So I think that's a good overview of the product. Maybe you can walk us through one or two customer cases, just an end-to-end perspective of who you're working with. What were the problems that they were having? I think it's a good way to help us understand what this actually looks like in practice.

Matt: Absolutely. One of our biggest customers is BP, specifically BPX in North America. We have done a number of public use cases with them. You can actually Google BPX, EOT and AWS. You'll find an architecture and so on. Just about the use cases here and what we have implemented here, the need here is to really bring data from the SCADA systems and from the historians into an industrial data lake. The industrial data lake has many, many different use cases inside BPX, and I cannot go into what the exact use cases are. But it grew. When we originally started to work with BPX, we started with a small number of use cases. Over time, it became more use cases. Then once we did the public case study and all that stuff, it became clear how big this really is for us. With BPX, we went through many of the milestones or all of the milestones that you need to go from having the first POC, where you really try to test out some stuff, to actually deciding the full-blown architecture and putting it in production for a billion-dollar business.

The other one that I also want to mention here real quick, because it's related but very different, is a company called Hilcorp. They are basically the biggest privately owned oil and gas company in the United States. Hilcorp is super interesting because their business model is basically to buy assets that are end of life. Basically, you take an oilfield and say, "Well, nobody wants this anymore because it's the end of life. It's an old oil field." But Hilcorp, they actually make it bloom or make it produce even more energy and oil than it ever has before, even though nobody else would be able to do it. So they're like magicians in a way. At the same time, from a challenge perspective, what's important is that the people inside the company are able to use analytics and are able to understand the lifespan and the health of their assets really well. Because they have to be better, literally, than almost anyone else on the planet to make that kind of miracle happen.

For us, we basically built the architecture, the entire digital twin data lake. This is also a public use case that you can Google or maybe even ChatGPT it. You'll see the different kinds of components, the different types of data sources that we brought together to actually make this happen. One point in this architecture is that the data lake that is used is Databricks. So we actually have an Apache Spark-driven real-time analytics system that basically processes the industrial data and really scales. It really can go to tens of thousands of assets and process machine learning models to give the operators insights and help them in ways that you couldn't before, that didn't even exist before. We really created a breakthrough milestone for them.

Erik: So you have quite a strong focus on energy. Is that because of unique needs in the market, or is that just the need as a business for you to just focus on a particular segment?

Matt: Well, I just mentioned two energy companies. We also have manufacturing. But the truth is that we started in energy, so it's our biggest segment. Currently, today, actually, we're at the Hannover Messe in Germany announcing our Twin Talk GPT, which is really focused on manufacturing specifically. For us, really, it's energy, manufacturing, and transportation. These are the three industrial sectors that we are focused on. But we really started in energy.

Erik: Got it. I would love to touch on this Twin Talk GPT. Actually, this is a topic I've been looking at a lot, this concept of knowledge management. I don't know how you're looking at this. We're looking at it from a perspective of being able to provide people, maintenance engineers, et cetera, with an interface for accessing relevant information to help troubleshoot maintenance issues and so forth. But how are you looking at GPT and integrating it into a factory environment?

Matt: First, let's look at this topic and define, from what we see and what we do, the difference between generic AI and generative AI. Because AI has been around. It already has been a hot topic. Now we have generative AI and everybody's like, "Wow. This is the biggest breakthrough ever." But what is the difference? What's now so different? For us, it is the ability to learn from a data set to create machine learning models that learn. Instead of just analyzing the data, you're able to actually generate data. That's, for us, the big difference.

The traditional AI neural networks, the Bayesian networks, recurrent neural networks, any other neural networks are able to learn and understand, let's say, time series data, which is really the important part with sensors. Language models don't really matter that much there. Then the generic AI can detect anomalies. But generative AI can basically produce the events that are the anomalies. So I'll give you an example. Let's say you want to create a new predictive analytics application, or you want to do a proactive maintenance system. If you want to train a machine learning model to be able to actually do predictive analytics or maintenance, you're going to have to have some data that has bad events, things that go wrong. You need data that actually has the things that it should detect. And so what you can do with generative AI is you can take, let's say, data from 20,000 assets over the last 10 years. Train a model. Then you create an asset that's a generative AI transformer model. That's the important part. This trained transformer model will be able to simulate any type of event you want it to simulate. You can say, "Well, what if this breaks down? Simulate the data of the sensors when this thing breaks down, or this valve shuts off, or somebody falls onto the conveyor belt, or an earthquake happens, the whole thing is in flames or whatever." So you can basically tell the generative AI to create these events by command. You can basically tell it to produce the data for this particular event.

The other part is it gives you a level of certainty that the simulated data is actually accurate. So if you say, "This is what happens if somebody falls on the conveyor belt," it also will say, "Well, we are uncertain or certain that this is accurate. This is an accurate representation of what really will happen." It's like with ChatGPT, which is a language-based transformer model: it can tell you an answer to a question. It might be wrong. They call it hallucinations. The same thing can happen if you do this with sensor data. It could be wrong.

However, a generative AI model actually knows and has a sense of how uncertain its answer is. That is not typically shown when you use ChatGPT, but maybe they will do this in the future. So it could say, well, I'm giving you an answer. It's 10% certain that's actually true. Internally, it knows this. It still gives you the answer. It doesn't tell you this right now. But for us, we basically built a time series-based generative, pre-trained transformer system into our product. Now, what does it mean? It means that you can actually train a model with the data that flows in from industrial systems. Then you can switch off the real data and say, "Okay. Continue to deliver this data." While it's delivering real-time data, you can also steer it and say, "Now create a downtime event." Then you can test if all your predictive analytics applications actually catch it, that they set off the right alerts, that they sent an email or text message to the right people. It enables you to do more than just play back a recorded failure in the real-time data.

Generative AI can create events that are going beyond what it actually has just learned. It's not just a playback version. It actually can create new events that have never been actually learned from historical data. So that's really the breakthrough that will enable and accelerate any type of testing, digital acceleration of pre-emptive maintenance, proactive intervention. Any of these kinds of applications or systems will benefit from it.
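
The test workflow described above (keep delivering realistic data, command a downtime event, then check that the analytics raise an alert) can be sketched in a few lines of Python. This is only an illustration under loud assumptions: the "generator" here is a hand-written noise source with a manually injected flat-line, standing in for the trained generative transformer, and the detector is a simple z-score rule rather than a real predictive analytics application. All function names and values are hypothetical.

```python
import random
import statistics


def normal_stream(n: int, mean: float = 50.0, noise: float = 1.0, seed: int = 0) -> list[float]:
    """Stand-in for the generative model: emits plausible 'healthy' sensor readings."""
    rng = random.Random(seed)
    return [mean + rng.gauss(0.0, noise) for _ in range(n)]


def inject_downtime(readings: list[float], start: int, length: int, level: float = 0.0) -> list[float]:
    """Stand-in for 'now create a downtime event': flat-line the signal for a while."""
    out = list(readings)  # copy so the healthy stream is left untouched
    for i in range(start, min(start + length, len(out))):
        out[i] = level
    return out


def detect_anomalies(readings: list[float], baseline_len: int = 100, threshold: float = 6.0) -> list[int]:
    """Toy detector: flag indices deviating more than `threshold` sigmas from a baseline window."""
    baseline = readings[:baseline_len]
    mu = statistics.fmean(baseline)
    sigma = statistics.stdev(baseline)
    return [
        i
        for i, value in enumerate(readings)
        if i >= baseline_len and abs(value - mu) > threshold * sigma
    ]


# Simulate healthy data, command a downtime event, and check the detector reacts.
healthy = normal_stream(300)
with_event = inject_downtime(healthy, start=200, length=20)
alerts = detect_anomalies(with_event)
event_caught = set(range(200, 220)).issubset(alerts)
```

In a real setup, the check on `event_caught` would be replaced by verifying that the production alerting path (email, text message, dashboard) actually fired for the simulated event.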

Erik: Okay. Super interesting. Was it right? You're announcing that just recently, right? Is it this week that the announcement is going out or after?

Matt: Yes, it's actually today. So it's coinciding with this. Yes, it's this week.

Erik: Okay. Great. Well, we'll have to have another podcast maybe in a year. I'd be really interested in seeing how this ends up being adopted. I think this is an area where everybody's bringing product to market, basically, four months after GPT launched or so. So I think the next year will yield a lot of innovation, a lot of learning on where the value is here. So I would love to have a follow-up conversation then.

Matt: Absolutely.

Erik: Great. Matt, thanks so much for taking the time. I guess maybe just the last question from my side then would be, if one of our listeners wants to follow up and have a conversation with you or your team, what's the best way for them to reach out?

Matt: The best way would be to hit me up on LinkedIn. Matt Oberdorfer on LinkedIn. It's easy. Just send me a message.

Erik: Awesome. Thanks, Matt. I really appreciate your time today.

Matt: Well, thank you, Erik, for having me.